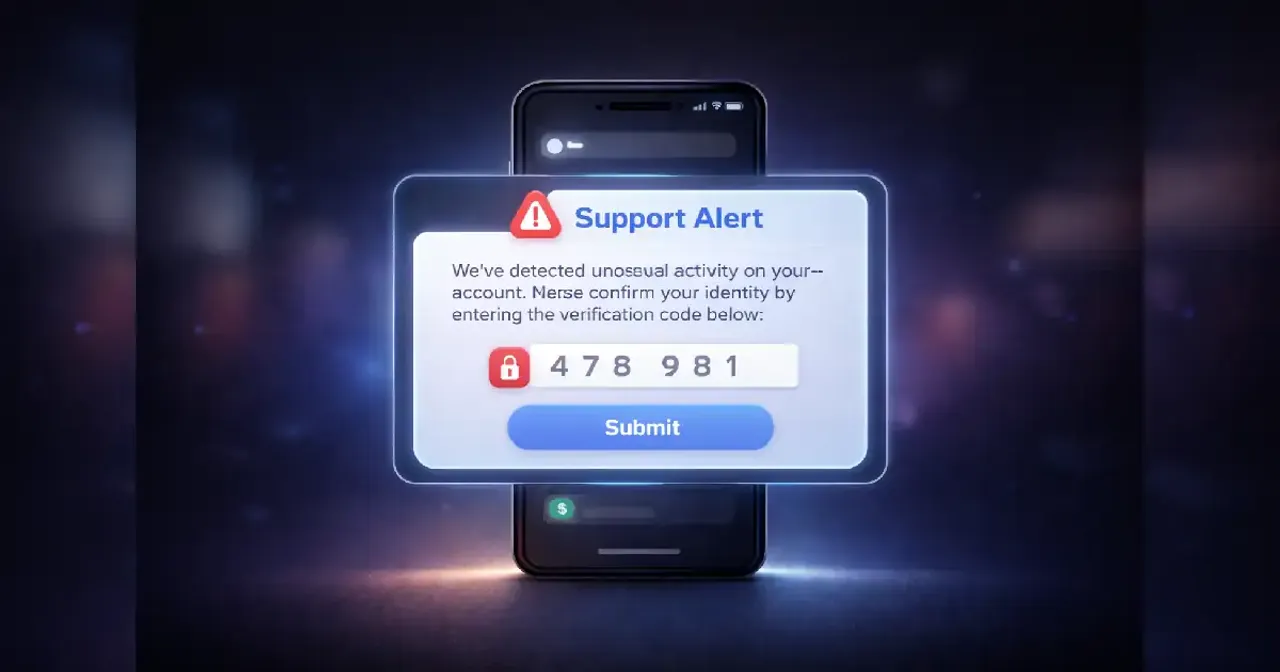

Fake support messages are becoming so polished that many users mistake them for legitimate help, responding quickly and sometimes sharing verification codes without hesitation. The message often feels routine: an alert about unusual activity, a request to confirm a login, a polite reminder to secure your account. Nothing dramatic. Nothing obviously suspicious. Just a tone of quiet urgency that blends seamlessly into daily digital life.

That familiarity is precisely what makes it effective.

We have grown used to receiving automated security notifications. Banks send one-time passwords. Social media platforms request identity verification. Delivery apps confirm orders. In this environment, a message asking for a code doesn’t feel strange. It feels procedural.

The deception lies not in complexity, but in imitation.

The Illusion of Authority

Most fraudulent “support” outreach borrows credibility from trusted brands. The logo looks correct. The language mirrors official communication. The sender name appears familiar at first glance. Even the formatting resembles real notifications.

Authority triggers compliance. When something appears to come from a bank, payment service, or major tech platform, our instinct is to cooperate. We assume the system is protecting us.

The psychology behind this is simple. People respond differently to perceived authority figures. In the physical world, uniforms and official badges signal legitimacy. Online, design and tone play the same role.

The message may say your account is temporarily restricted or that unusual activity was detected. It might include just enough detail to sound plausible, but not enough to invite scrutiny. The goal isn’t to overwhelm you with information; it’s to create a narrow window of action.

And that window often includes a request: “Please share the code sent to your phone.”

Why Verification Codes Feel Harmless

One-time passwords and verification codes are meant to enhance security. We’re told they protect our accounts from unauthorized access. Over time, they’ve become part of routine digital hygiene.

Because of that familiarity, the request to “confirm your code” doesn’t automatically raise alarm.

But verification codes are designed to confirm identity. When shared with someone else, especially in real time, they can grant access to accounts that would otherwise remain secure.

The brilliance of fake support messages lies in their timing. Often, the scammer initiates a login attempt themselves. The legitimate service sends you a real security code. Almost immediately, the fraudulent message asks you to share it to “prevent account suspension” or “verify ownership.”

Technically, the code is real. The context is not.

That subtle manipulation makes the situation confusing. Users may believe they are cooperating with security measures when they are actually bypassing them.
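The sequence described above can be sketched as a toy simulation. This is only an illustration under stated assumptions: `ToyService`, its methods, and the account name `alice` are hypothetical and do not represent any real platform's login flow. The point it shows is that the service checks only the value of the code, not who is typing it.

```python
import secrets


class ToyService:
    """Hypothetical service that sends a one-time code for each login attempt."""

    def __init__(self):
        self.pending = {}  # account -> code awaiting confirmation
        self.inbox = {}    # account -> last code delivered to the owner's phone

    def start_login(self, account):
        # Anyone who knows the account name can trigger this step.
        code = f"{secrets.randbelow(10**6):06d}"
        self.pending[account] = code
        self.inbox[account] = code  # delivered to the real owner only

    def finish_login(self, account, code):
        # The service cannot tell who submits the code; it only compares values.
        expected = self.pending.get(account)
        if expected is None or not secrets.compare_digest(expected, code):
            return False
        del self.pending[account]  # a code works once
        return True


service = ToyService()
service.start_login("alice")                   # the scammer starts the login
blocked = service.finish_login("alice", "")    # without the code, access fails

shared_code = service.inbox["alice"]           # the real code on Alice's phone
taken_over = service.finish_login("alice", shared_code)  # Alice relays it

print(blocked, taken_over)
```

In this sketch the login attempt is blocked until the owner relays the code, at which point the same attempt succeeds. The code did its job; the person holding it was simply not the owner.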

Emotional Pressure Without Drama

Unlike older scams that relied on exaggerated threats or promises, modern fake support messages are calm. The language is measured. The urgency is implied, not shouted.

A typical message might say: “We detected unusual activity. If this wasn’t you, please confirm your identity.” It feels protective, almost reassuring.

This tone reduces resistance. Loud, aggressive messages can trigger suspicion. Quiet professionalism lowers it.

There is also the fear of inconvenience. Account lockouts are frustrating. Losing access to banking, email, or social platforms can disrupt daily routines. Faced with that possibility, many users prioritize quick resolution over careful verification.

The scam counts on that impulse.

The Shift to Direct Conversations

Another evolution in deceptive outreach is the move from static emails to interactive messaging. Fraudsters increasingly use SMS, chat apps, or even phone calls that feel conversational.

Instead of a generic email, you might receive a message that addresses you by name. If you respond, the conversation continues naturally. The “support agent” answers questions politely. The tone feels human.

This back-and-forth dynamic creates a sense of legitimacy. Real support services often operate through chat. The imitation is convincing because it mirrors common experience.

The line between genuine assistance and manipulation becomes blurred.

How Everyday Habits Increase Exposure

Our reliance on instant communication makes us more responsive. Notifications interrupt meetings, dinners, workouts. We glance quickly, decide quickly, act quickly.

Speed is built into digital culture.

That speed works against reflection. When a message appears during a busy moment, the priority becomes clearing it from the screen. If it looks official and offers a fast solution, compliance feels efficient.

Additionally, data leaks and public information can make messages appear more personalized. A scammer may reference your full name, partial phone number, or recent activity. These details add credibility.

Yet they often come from publicly available sources or previously exposed databases, not direct access to your private account.

The presence of personal information strengthens the illusion of legitimacy.

Why This Matters Beyond One Code

At first glance, sharing a numeric code seems minor. It doesn’t feel like revealing a password or financial details. But in many systems, that code functions as a temporary master key.

With it, attackers can reset passwords, bypass security layers, or take control of accounts tied to email or phone numbers. From there, the impact can extend outward: access to other linked platforms, impersonation of contacts, or unauthorized transactions.

The consequences are not always immediate. Sometimes access is used quietly, days or weeks later.

Understanding this broader chain reaction highlights why these scams are so effective. They don’t ask for everything at once. They ask for one small step that unlocks many others.

The Role of Social Engineering

Fake support messages are a classic example of social engineering: manipulating human behavior rather than exploiting technical flaws.

Instead of hacking into systems, scammers persuade users to hand over the keys.

This approach is efficient. Security technology can be complex and expensive to breach. Convincing a person to cooperate is often simpler.

The success of this tactic relies on predictability. Humans tend to trust familiar structures. We expect support teams to request confirmation. We believe security alerts should be addressed quickly.

Fraudulent actors design their messages around these expectations.

The Future: More Personal, More Polished

As artificial intelligence tools improve, so does the quality of deceptive communication. Language errors are less common. Messages can be tailored to regional styles or even individual behavior patterns.

Voice calls sound professional. Chat responses adapt in real time. In some cases, even cloned voices are entering the conversation.

This doesn’t mean digital spaces are inherently unsafe. Security systems are also evolving, and many platforms warn users explicitly never to share verification codes.

Still, the sophistication of imitation continues to grow. The distinction between authentic and fraudulent communication becomes subtler.

Rebuilding Digital Confidence

Awareness doesn’t require suspicion of every message. It requires understanding how authority, urgency, and familiarity can be engineered.

The key insight is simple: legitimate services rarely need you to share a verification code with someone contacting you directly. Codes are typically meant to confirm actions you initiate yourself.
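As a rough illustration of that rule, the sketch below flags messages that ask the reader to hand over a code, while letting through notices that warn against sharing one. The function name `asks_for_code` and the word lists are assumptions made for this example; they are far too narrow to serve as a real filter and only show the shape of the check.

```python
import re

# Illustrative pattern only: a request verb that is not negated,
# followed in the same sentence by a mention of a code.
REQUEST = re.compile(
    r"(?<!not )(?<!never )(?<!n't )"
    r"\b(share|send|provide|confirm)\b[^.?!]*\b(code|otp|one-time password)\b",
    re.IGNORECASE,
)


def asks_for_code(message: str) -> bool:
    """Return True if the message appears to request a verification code."""
    normalized = message.replace("\u2019", "'")  # treat curly apostrophes as plain
    return REQUEST.search(normalized) is not None


examples = [
    "Please share the code sent to your phone.",
    "Your code is 482913. Never share it with anyone.",
    "Confirm your code now to prevent account suspension.",
]
for text in examples:
    print(asks_for_code(text), "-", text)
```

A match here is a reason to pause, not proof of fraud, and a carefully worded scam could easily avoid these phrases.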

When communication feels urgent yet oddly generic, it’s worth pausing, even for a few seconds. Reflection disrupts the momentum that scammers rely on.

Digital literacy today extends beyond knowing how to use apps. It includes recognizing how easily trust can be simulated.

Fake support messages succeed because they feel routine. But routine can be examined.

Technology will continue to evolve. So will imitation. The difference between manipulation and protection often lies in awareness of context.

In the end, the most powerful defense isn’t technical complexity; it’s understanding how trust is built, and how it can be borrowed without permission.

Frequently Asked Questions

What are fake support messages?

They are fraudulent communications that impersonate customer service or technical support teams to trick users into sharing verification codes or sensitive information.

Why do scammers ask for verification codes?

Verification codes can grant temporary access to accounts or allow password resets. Sharing them can unintentionally give attackers control over your account.

Are verification codes themselves fake?

Often the code is real and sent by a legitimate service. The deception lies in who is asking for it and why.

Why do these messages look so professional?

Scammers copy official branding, tone, and formatting. Some also use advanced language tools to make messages appear authentic and error-free.

Will fake support scams become more common?

As communication tools evolve, imitation becomes easier. However, awareness and stronger platform safeguards are also advancing alongside these tactics.